The AI Selection Framework Most Leaders Get Backwards

When a founder or CEO tells me they need "AI," I don't ask about technology. I ask about the outcome they want to create, then have them prioritize: better, cheaper, or faster.

Most assume the answer determines which AI tool to buy. It doesn't. It determines whether they're ready for AI at all.

The Three Types of AI Most Organizations Conflate

AI Assistants, AI Agents, and AI-enabled Apps aren't variations of the same thing. They represent completely different interaction models with completely different risk profiles.

AI Assistants help you think—drafting emails, summarizing documents. Humans control every action.

AI Agents act on your behalf, executing multi-step workflows across systems with permissions and logging.

AI-enabled Apps embed AI behind structured interfaces—forms and buttons that produce consistent outputs at scale.

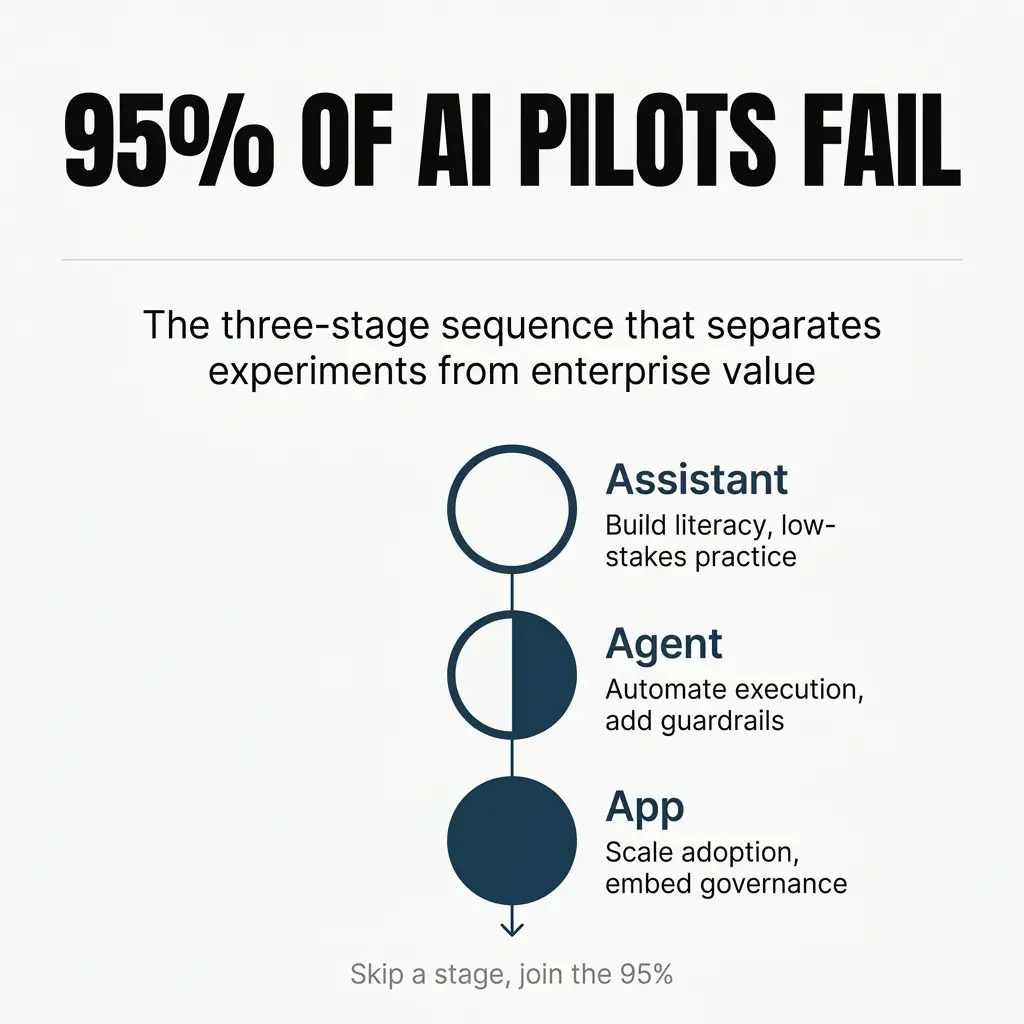

This distinction matters because only 5% of AI pilots achieve rapid revenue acceleration. The other 95% stall because leaders choose based on sophistication rather than diagnosing the nature of their work.

AI Maturity Determines What You Can Execute

I apply the better-cheaper-faster diagnostic to the outcome, then balance it against organizational AI maturity. Low maturity leans toward Assistants. Higher maturity enables Agents and Apps.

Low maturity means AI exists as a point solution: drafting emails, creating tasks, summarizing meetings. Necessary but low-value work.

Comprehensive strategy looks different: AI supplants human time on complex tasks using sophisticated context and governance. You have a RAG strategy that works. You're measuring cycle time reduction, not productivity vibes.

The gap isn't incremental. 74% of companies cannot show tangible value from AI. The pattern I see: leaders try to skip the email-drafting phase and jump straight to complex automation.

Why Skipping the Assistant Phase Breaks Agent Deployment

The Assistant phase isn't a step you can skip. It builds organizational literacy about how AI actually works. When companies skip this phase, I see two failure patterns:

For Agents: They source information from too many places, causing token inefficiency and hallucinations. 88% of AI agent projects fail before production, averaging $340,000 in direct costs.

For Apps: Lack of design strategy creates tech debt—incomplete apps, data security issues, sprawl without standards.

The underlying problem: leaders don't understand how AI makes decisions because they never worked with it on low-stakes tasks.

The Information Architecture Problem Nobody Audits

When I audit Agent instructions and RAG strategies, the pattern is an overcomplicated information architecture. Leaders point the Agent at everything, assuming more context produces better answers. More sources create confusion. The AI doesn't know where to look first. It hedges, guesses, and generates responses that sound confident but reference wrong data.

The fix isn't better prompts—it's auditing instructions to ensure the AI knows exactly where to look. You need clear boundaries, not comprehensive access.
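One way to picture "clear boundaries, not comprehensive access" is an explicit, ordered allowlist of sources per topic, plus a check that flags anything an Agent configuration requests beyond it. This is a minimal sketch under stated assumptions: the topic names, source names, and helper functions below are hypothetical and not taken from any particular Agent platform.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class SourceScope:
    """One topic the Agent handles and the only sources it may consult."""
    topic: str
    sources: tuple[str, ...]  # ordered: the first entry is where to look first


# Hypothetical scopes for illustration; real topics and sources will differ.
SCOPES = {
    "refund_policy": SourceScope("refund_policy", ("policy_handbook",)),
    "order_status": SourceScope("order_status", ("orders_db", "shipping_api")),
}


def sources_for(topic: str) -> tuple[str, ...]:
    """Return the ordered allowlist for a topic, or () if the topic is out of scope."""
    scope = SCOPES.get(topic)
    return scope.sources if scope else ()


def audit_instructions(requested: dict[str, list[str]]) -> dict[str, list[str]]:
    """Report, per topic, the sources an Agent config requests beyond its allowlist."""
    violations: dict[str, list[str]] = {}
    for topic, wanted in requested.items():
        allowed = set(sources_for(topic))
        extra = [s for s in wanted if s not in allowed]
        if extra:
            violations[topic] = extra
    return violations
```

Running `audit_instructions({"refund_policy": ["policy_handbook", "wiki", "slack"]})` would flag `wiki` and `slack` as out-of-bounds sources for that topic, which is the kind of finding an instruction audit is meant to surface.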

Research on naive RAG implementations shows that low precision (misaligned chunks) and low recall (failure to retrieve relevant chunks) both lead to hallucinations.
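To make precision and recall concrete, here is a small sketch of how the two could be computed for a single retrieval call. It assumes you have a hand-labeled set of chunk IDs that are actually relevant to the question; the function name and chunk IDs are illustrative only.

```python
from __future__ import annotations


def retrieval_precision_recall(
    retrieved: list[str], relevant: set[str]
) -> tuple[float, float]:
    """Compute precision and recall for one retrieval call.

    precision = share of retrieved chunks that are relevant
    recall    = share of relevant chunks that were retrieved
    """
    retrieved_set = set(retrieved)
    hits = len(retrieved_set & relevant)
    precision = hits / len(retrieved_set) if retrieved_set else 0.0
    recall = hits / len(relevant) if relevant else 0.0
    return precision, recall


# Four chunks retrieved, only one of them relevant; one relevant chunk missed.
precision, recall = retrieval_precision_recall(
    ["c1", "c2", "c3", "c4"], {"c1", "c5"}
)
print(precision, recall)  # 0.25 0.5
```

In this example the Agent is handed three irrelevant chunks for every relevant one and never sees half of what it needed, which is the situation that pointing at too many sources tends to produce.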

The Work Diagnosis Framework That Actually Predicts Success

The choice between Assistants, Agents, and Apps depends on the nature of the work, not technology sophistication.

Messy, creative, or judgment-heavy work: Use an Assistant. Leadership memos, investor updates, strategic planning—these require human discernment at every step.

Repeatable work touching systems of record: Use an Agent with guardrails. Support triage, sales ops, finance intake—workflows with clear inputs, defined steps, and measurable outcomes.

Many people needing the same outcome: Build an AI-enabled App. Proposal generators, onboarding portals, compliance checkers—structured inputs with governance by design.

These aren't competing options. They're often a sequence.
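The diagnosis above can be sketched as a small decision function. This is an illustration, not a formal rubric: the three boolean inputs, the order in which they are checked, and the fallback to an Assistant when nothing else applies are all assumptions made for the example.

```python
from enum import Enum


class Interaction(Enum):
    ASSISTANT = "AI Assistant"
    AGENT = "AI Agent with guardrails"
    APP = "AI-enabled App"


def diagnose(judgment_heavy: bool, repeatable: bool, shared_by_many: bool) -> Interaction:
    """Map the nature of the work to an interaction type.

    Assumed precedence: judgment-heavy work always gets an Assistant;
    otherwise work many people need becomes an App; otherwise repeatable
    work becomes an Agent. Anything else defaults to the lowest-risk option.
    """
    if judgment_heavy:
        return Interaction.ASSISTANT
    if shared_by_many:
        return Interaction.APP
    if repeatable:
        return Interaction.AGENT
    return Interaction.ASSISTANT


# Support triage: repeatable, touches systems of record, one team owns it.
print(diagnose(judgment_heavy=False, repeatable=True, shared_by_many=False).value)
# AI Agent with guardrails
```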

The Sequential Deployment Pattern Mature Organizations Follow

The mature pattern: Assistant first, Agent second, App third.

Use the Assistant to learn the workflow. Understand where AI adds value and introduces risk. Build organizational literacy.

Automate execution with an Agent. Measure cycle time, throughput, error rates. Add approvals and logging to prevent expensive mistakes.

Scale adoption with an App. Embed AI into structured interfaces that many people can use without understanding how it works.
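For the Agent step, the three measurements named above (cycle time, throughput, error rates) can be computed from a simple log of runs. The sketch below assumes each run records a start time, a finish time, and whether it failed; the `Run` record and `summarize` function are hypothetical names for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Run:
    started: datetime
    finished: datetime
    failed: bool


def summarize(runs: list[Run], window: timedelta) -> dict[str, float]:
    """Average cycle time (minutes), throughput (runs/hour), and error rate."""
    if window <= timedelta(0):
        raise ValueError("window must be positive")
    if not runs:
        return {"avg_cycle_minutes": 0.0, "throughput_per_hour": 0.0, "error_rate": 0.0}
    total_seconds = sum((r.finished - r.started).total_seconds() for r in runs)
    hours = window.total_seconds() / 3600
    return {
        "avg_cycle_minutes": total_seconds / len(runs) / 60,
        "throughput_per_hour": len(runs) / hours,
        "error_rate": sum(r.failed for r in runs) / len(runs),
    }


t0 = datetime(2025, 1, 6, 9, 0)
runs = [
    Run(t0, t0 + timedelta(minutes=10), failed=False),
    Run(t0 + timedelta(hours=1), t0 + timedelta(hours=1, minutes=20), failed=True),
]
print(summarize(runs, window=timedelta(hours=2)))
# {'avg_cycle_minutes': 15.0, 'throughput_per_hour': 1.0, 'error_rate': 0.5}
```

Capturing the same numbers for the manual process before the Agent goes live gives you a baseline to compare against.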

Organizations are now putting 11x more AI models into production compared to a year ago, improving the experimental-to-production ratio from 16:1 to 5:1.

But staying in production tracks maturity: 45% of high-maturity organizations keep AI initiatives in production for three years or more, compared with only 20% of low-maturity organizations.

The difference isn't the technology. It's following the sequence.

The Guardrail Architecture Most Agent Deployments Skip

When an AI Assistant generates a bad answer, you delete it. When an AI Agent takes a bad action—approves a refund, triggers a payment—the consequences are operational and financial.

Guardrails aren't optional, but most organizations skip them to speed deployment.

Adequate guardrail architecture includes:

Approvals for high-stakes actions. Agents draft; humans approve before execution.

Logging for every decision. An audit trail showing what the Agent evaluated and why.

Minimum necessary permissions. No access to systems beyond the workflow.

Safe failure handling. When encountering ambiguity, escalate to humans rather than guess.
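The four guardrails above can be sketched as a single gate that every proposed Agent action passes through before anything executes. This is a minimal illustration under assumptions: the `Action` fields, the dollar threshold, and the idea that the Agent supplies a confidence score are all hypothetical, and a real deployment would wire the decisions into its own approval and logging systems.

```python
from __future__ import annotations

import logging
from dataclasses import dataclass

# Audit logger; handler and level configuration are left to the host application.
log = logging.getLogger("agent.audit")


@dataclass
class Action:
    name: str                 # e.g. "issue_refund"
    system: str               # system of record the action touches
    amount: float = 0.0       # financial impact, if any
    confidence: float = 1.0   # hypothetical score supplied by the Agent


@dataclass
class Guardrails:
    allowed_systems: frozenset[str]  # minimum necessary permissions
    approval_threshold: float        # amounts above this need a human
    min_confidence: float            # below this, escalate instead of guessing

    def decide(self, action: Action) -> str:
        """Return 'deny', 'escalate', 'needs_approval', or 'execute'."""
        if action.system not in self.allowed_systems:
            decision = "deny"
        elif action.confidence < self.min_confidence:
            decision = "escalate"
        elif action.amount > self.approval_threshold:
            decision = "needs_approval"
        else:
            decision = "execute"
        # Log every decision, including the inputs that were evaluated.
        log.info(
            "action=%s system=%s amount=%.2f confidence=%.2f decision=%s",
            action.name, action.system, action.amount, action.confidence, decision,
        )
        return decision


rails = Guardrails(frozenset({"billing"}), approval_threshold=100.0, min_confidence=0.8)
print(rails.decide(Action("issue_refund", "billing", amount=500.0, confidence=0.95)))
# needs_approval
```

The ordering is a design choice: permissions are checked first so an out-of-scope action is never even queued for approval, and ambiguity is escalated before the amount is considered.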

42% of regulated enterprises plan to introduce manager features like approvals and review controls, compared to only 16% of unregulated enterprises.

The Adoption Paradox and When Apps Become Infrastructure

The most powerful AI often has the lowest organizational uptake.

Assistants are flexible but don't adapt to workflows. Every user starts from scratch.

Agents create compounding leverage but require stable processes. If workflows change frequently, Agents become fragile and expensive.

Apps solve adoption by embedding AI into structured interfaces. Users fill out forms, click buttons, get consistent outputs—no prompting required.

Apps become infrastructure, standardizing AI use and enforcing governance by design.

But Apps create prototype debt. You build a quick demo that works, scale without proper ownership, and six months later have 40 half-finished apps with no one knowing which are still used.

Treat Apps like products: assign ownership, set maintenance standards, retire what doesn't deliver value.
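Treating Apps like products implies keeping an inventory you can review. A rough sketch of such a review follows, assuming each App record carries an owner and a last-used date; the 90-day idle cutoff and all names here are illustrative assumptions, not a recommended standard.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class AppRecord:
    name: str
    owner: Optional[str]  # None means nobody owns it
    last_used: date


def review(
    apps: list[AppRecord], today: date, max_idle: timedelta = timedelta(days=90)
) -> dict[str, list[str]]:
    """Sort apps into keep, assign_owner, and retire buckets."""
    report: dict[str, list[str]] = {"keep": [], "assign_owner": [], "retire": []}
    for app in apps:
        if today - app.last_used > max_idle:
            report["retire"].append(app.name)        # idle too long
        elif app.owner is None:
            report["assign_owner"].append(app.name)  # in use but unowned
        else:
            report["keep"].append(app.name)
    return report


apps = [
    AppRecord("proposal_generator", "sales_ops", date(2025, 5, 20)),
    AppRecord("onboarding_portal", None, date(2025, 5, 1)),
    AppRecord("old_demo", "someone", date(2024, 11, 1)),
]
print(review(apps, today=date(2025, 6, 1)))
# {'keep': ['proposal_generator'], 'assign_owner': ['onboarding_portal'], 'retire': ['old_demo']}
```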

The Enterprise Value Signal Investors and Acquirers Recognize

Your AI choices signal strategic maturity to investors and acquirers.

Still experimenting with Assistants after 18 months signals low literacy. Deploying Agents without guardrails signals operational immaturity. Forty ungoverned Apps signal tech debt accumulation.

Investors and acquirers look for sequential deployment: Assistants to build literacy, Agents with guardrails, Apps with governance.

That pattern shows you capture value without accumulating risk—you execute, not just experiment.

High performers are three times more likely to have senior leaders demonstrate ownership of AI initiatives. Leadership championing is the primary differentiator for value capture.

The Diagnostic Question That Cuts Through the Noise

When deciding between AI Assistants, Agents, and Apps, ask:

Does this work require human judgment at every step, or can it be systematized?

If it requires judgment, use an Assistant. If it can be systematized, use an Agent with guardrails. If many need the same outcome, build an App.

The better-cheaper-faster diagnostic reveals desired outcomes. AI maturity determines execution capability. Work nature determines interaction type.

Most organizations choose based on what sounds innovative rather than diagnosing what their work requires.

The result: expensive experiments that never reach production, hallucinating Agents sourcing from too many places, and Apps accumulating as tech debt.

The path forward isn't more sophisticated AI. It's better diagnosis.

Start with the outcome. Assess maturity. Match the interaction type to the work nature. Follow the sequence. Build guardrails. Measure what matters.

That's how you move from experimentation to enterprise value.

Frequently Asked Questions

How do you know if your organization has low AI maturity or high AI maturity?

Low maturity means AI is used for point solutions—drafting emails, summarizing meetings, creating tasks. It's helpful but not strategic. High maturity means AI supplants human time on complex tasks with sophisticated context, governance, and measurement. You have a working RAG strategy and measure cycle time reduction, not productivity feelings. If you're still wondering whether AI is delivering value, you're low maturity.

Can you skip Assistants and go straight to building Agents or Apps?

You can, but the failure rate tells the story. 88% of Agent projects fail before production, averaging $340,000 in direct costs. The Assistant phase builds organizational literacy: how AI makes decisions, where it adds value, where it introduces risk. Skip that phase and you deploy Agents that source from too many places and hallucinate, or Apps that accumulate as ungoverned tech debt. The learning happens either way; the question is whether you pay for it on low-stakes tasks or expensive failures.

What's the biggest mistake leaders make when deploying AI Agents?

Pointing the Agent at too many information sources. Leaders assume more context produces better answers. It doesn't. More sources create confusion: the AI doesn't know where to look first, so it hedges and guesses. The fix is auditing instructions to ensure the Agent knows exactly where to look, with clear boundaries instead of comprehensive access. Naive RAG implementations tend to suffer from low precision and low recall, and both lead to hallucinations.

How long should you stay in the Assistant phase before moving to Agents?

There's no fixed timeline. The signal is organizational literacy, not calendar time. You're ready for Agents when your team understands how AI makes decisions, can articulate where it adds value versus introduces risk, and has stable, repeatable processes worth automating. If leadership is still asking "is AI working?", you're not ready. When you're measuring specific outcomes and discussing tradeoffs, you've built sufficient literacy.

What does "adequate guardrails" actually mean for AI Agents?

Four components: (1) Approvals for high-stakes actions: Agents draft, humans approve before execution. (2) Logging for every decision: an audit trail showing what the Agent evaluated and why. (3) Minimum necessary permissions: no system access beyond the specific workflow. (4) Safe failure handling: when encountering ambiguity, escalate to humans rather than guess. 42% of regulated enterprises plan to introduce manager features like approvals and review controls, compared to only 16% of unregulated enterprises. That gap helps explain why Agent failures are so expensive.

When should you build an AI-enabled App instead of using an Agent?

When many people need the same outcome and you want governance by design. Apps embed AI behind structured interfaces—forms and buttons that produce consistent outputs without requiring users to understand prompting. They solve the adoption paradox: powerful AI with low uptake. But treat Apps like products—assign ownership, set maintenance standards, retire what doesn't deliver value. Otherwise you accumulate prototype debt: 40 half-finished apps with no one knowing which are still used.

How do investors and acquirers evaluate your AI strategy during diligence?

They look for sequential deployment patterns: Assistants to build literacy, Agents with guardrails, Apps with governance. That sequence signals you capture value without accumulating risk; you execute, not just experiment. Red flags: still experimenting with Assistants after 18 months (low literacy), deploying Agents without guardrails (operational immaturity), or having dozens of ungoverned Apps (tech debt accumulation). High performers are three times more likely to have senior leaders demonstrate ownership of AI initiatives, and that leadership championing is the primary differentiator for value capture.

What's the one diagnostic question that determines which AI type you need?

Does this work require human judgment at every step, or can it be systematized? If it requires judgment (leadership memos, strategic planning, investor updates), use an Assistant. If it can be systematized with clear inputs and defined steps (support triage, sales ops, finance intake), use an Agent with guardrails. If many people need the same outcome (proposal generation, onboarding, compliance), build an App. Work nature determines interaction type, not technology sophistication.